QuestSage - Multi-Agent Research Synthesis System

QuestSage is a multi-agent AI system that transforms complex research queries into actionable insights by orchestrating specialized AI agents in collaborative dialogues. The system simultaneously queries arXiv for academic papers, Google Search for industry insights, Reddit for community discussions, and Perplexity AI for deep analysis, then synthesizes findings into evidence-backed reports.

Each agent brings distinct reasoning capabilities: ChatGPT excels at pattern recognition, Gemini provides strategic analysis, and Claude delivers high-quality synthesis. By having multiple agents engage in structured dialogue rather than relying on a single model, QuestSage achieves more nuanced and well-rounded research synthesis.

The system is supports multiple AI providers (OpenAI, Google Gemini, Anthropic Claude, Perplexity) with intelligent rate limiting and comprehensive testing. It’s particularly valuable for academic research, business intelligence, and decision support where synthesizing information from multiple sources is critical.

See the GitHub repository for implementation details and documentation.

Chatbot Memory with Web Search - Reasoning-Powered AI Assistant

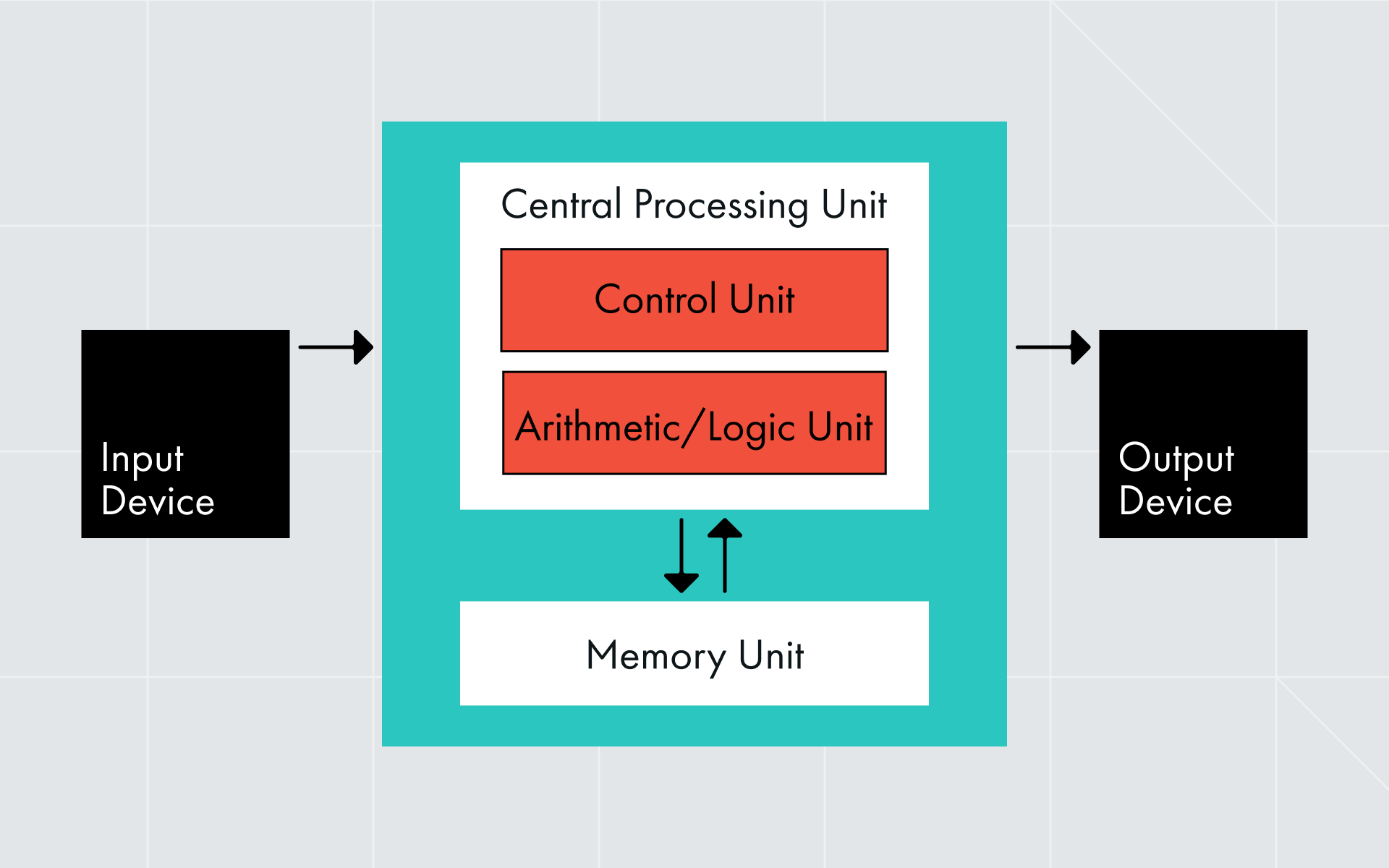

This project was inspired by the von-Neumann architecture, which separates computation, memory, and I/O into distinct components. In LLM systems, we see a striking parallel: the language model serves as the processor (executing reasoning and computation), semantic memory acts as the storage system (persisting information across interactions), and tool calls function as I/O operations (interacting with external systems like web search).

A production-ready chatbot system that combines GPT-OSS reasoning capabilities with persistent semantic memory and real-time web search. The system demonstrates how to build an AI assistant that can remember information across conversations and search the web when needed, all powered by a reasoning-capable language model.

The architecture features a unified server that runs both GPT-OSS-20B and Qwen/Qwen3-Embedding-8B models, pre-loaded at startup for minimal latency. Semantic memory uses FAISS-based vector storage with embeddings generated via HTTP API, enabling zero query-time latency. The web search tool leverages DuckDuckGo with robust rate limiting, caching, and error handling.

The interactive Gradio-based chat UI provides real-time streaming responses, automatic tool usage (web search and memory), reasoning chain visualization, and persistent conversation history. The system supports configurable reasoning levels (low, medium, high), temperature and top-p sampling controls, and both streaming and non-streaming modes.

This project showcases practical techniques for building production AI systems with memory, tool calling, and multi-modal capabilities, making it valuable for developers building conversational AI applications.

See the GitHub repository for implementation details, examples, and documentation.

Building Autoraters for Expert-Level Reasoning Data

We developed a multi-agent LLM system that uses model debate to automatically evaluate expert-level reasoning data, achieving a 4x improvement in error detection (23% to 82%) compared to single-model approaches. The system deploys two LLM debaters that independently solve problems and engage in structured debate, with a third LLM judge providing the final verdict.

When integrated into production pipelines, the autorater reduces human errors slipping through final review from 9% to 1%. As a live feedback copilot during data labeling, it helps contributors achieve 87% improvement in correctness. The system works with both closed-source and open-source models, enabling deployment on private infrastructure for data confidentiality.

This work demonstrates how multi-agent collaboration can achieve scalable oversight of advanced AI systems, providing a robust foundation for building more capable and trustworthy models. The approach is particularly valuable for frontier model training where data quality directly impacts model capabilities and safety.

For detailed methodology and results, see the blog post.